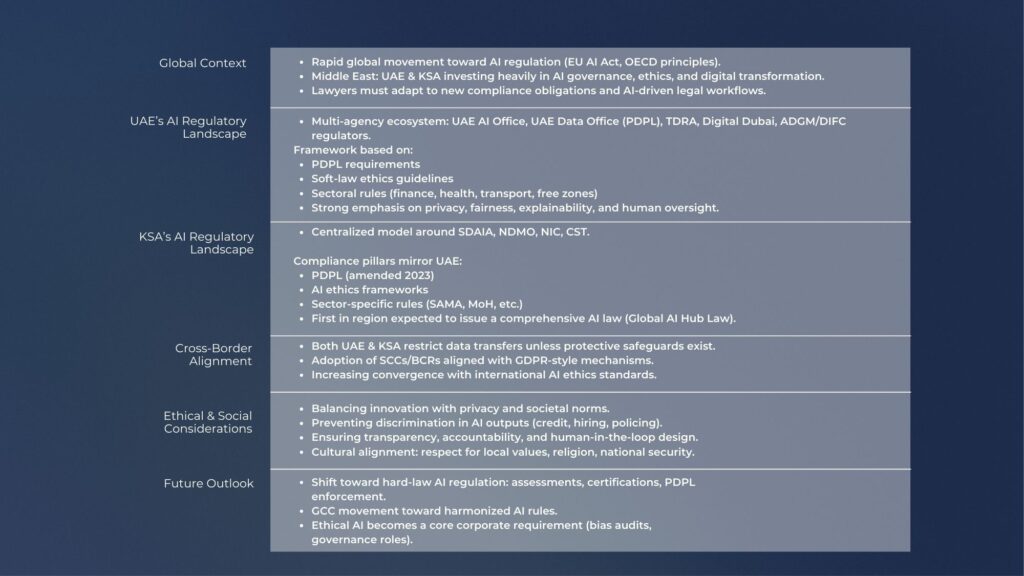

Saudi Arabia has placed artificial intelligence at the centre of its digital transformation strategy, positioning the technology as a key driver of economic diversification, innovation and productivity. The announcement of 2026 as the Year of Artificial Intelligence reflects the Kingdom’s commitment to accelerating adoption across industries while strengthening governance frameworks that ensure responsible and secure deployment. For businesses operating in or entering the Saudi market, artificial intelligence presents both significant opportunities and legal considerations that must be carefully addressed.

Artificial Intelligence as a Catalyst for Business Innovation

Artificial intelligence is already transforming sectors such as healthcare, finance, logistics, retail and energy. Advanced analytics, automation, and machine learning systems are enabling companies to improve operational efficiency, enhance decision-making, and create new products and services. Saudi Arabia’s national digital strategy encourages businesses to integrate AI-driven technologies to increase competitiveness, attract investment, and support the development of a knowledge-based economy. Organisations that successfully integrate AI technologies can unlock new forms of value through automation, predictive analysis, and intelligent digital services.

Data Governance and Regulatory Compliance

One of the most important legal considerations for businesses deploying artificial intelligence relates to data governance. AI systems depend on large volumes of data for training, analysis, and continuous improvement. The Saudi Personal Data Protection Law establishes rules governing the collection, processing and transfer of personal information. Companies using AI applications must ensure that personal data is processed lawfully, transparently, and for legitimate purposes. Organisations must implement strong data security measures, obtain appropriate consent where required and comply with restrictions on cross-border data transfers. For businesses developing AI solutions, compliance with data protection rules is essential for maintaining public trust and avoiding regulatory penalties.

Intellectual Property Protection in AI Development

Intellectual property protection plays a central role in AI innovation. Algorithms, software models, and data-driven solutions represent valuable intangible assets that contribute to long-term commercial value. Businesses developing proprietary artificial intelligence tools must consider how to protect these assets through copyright, patents and trade secrets where applicable. At the same time, organisations must ensure that the datasets and software components used to train AI systems do not infringe third parties’ intellectual property rights. Proper licensing arrangements and careful due diligence are necessary when integrating external data sources or third-party technologies.

Accountability and Responsible Use of AI

Another important legal issue concerns accountability and liability in automated decision-making. AI systems can influence a wide range of commercial activities, including credit decisions, healthcare diagnostics, customer service interactions, and supply chain management. Businesses must ensure that automated processes remain transparent and that human oversight mechanisms are maintained where necessary. Regulatory authorities are increasingly focused on ensuring that AI systems operate in a fair, explainable, and responsible manner. Organisations deploying AI solutions should therefore implement governance frameworks that include risk assessments, monitoring procedures and internal policies addressing algorithmic bias and ethical considerations.

Cybersecurity and Digital Infrastructure Protection

Cybersecurity is closely connected to the adoption of artificial intelligence. AI systems often operate within complex digital environments that process sensitive information and interact with critical infrastructure. Businesses must ensure that AI platforms are protected against cyber threats, data breaches, and unauthorised access. Robust cybersecurity policies, encryption standards and incident response procedures are essential to safeguard both corporate and consumer data. In Saudi Arabia, cybersecurity compliance is reinforced by national policies and sector-specific regulatory requirements designed to protect digital infrastructure.

Artificial Intelligence and Future Business Opportunities

Despite the legal challenges, artificial intelligence offers extraordinary opportunities for business innovation. AI-powered analytics can enable companies to predict market trends, optimise logistics networks, and improve customer engagement through personalised services. In the manufacturing and energy sectors, intelligent systems can enhance efficiency through predictive maintenance and advanced operational monitoring. In healthcare, AI-supported diagnostic tools and data analysis platforms have the potential to improve patient outcomes and support medical research. The integration of artificial intelligence into business models is therefore not only a technological development but also a strategic advantage in a rapidly evolving global economy.

Saudi Arabia’s regulatory approach aims to balance innovation with responsible governance. Institutions such as the Saudi Data and Artificial Intelligence Authority are actively developing policies, ethical frameworks and technical standards to guide the safe and effective use of AI technologies. By creating a clear regulatory environment, the Kingdom is encouraging businesses to invest in the research, development and deployment of artificial intelligence solutions while ensuring that digital transformation aligns with national values and legal standards.

For businesses operating in Saudi Arabia, successful adoption of artificial intelligence requires both technological capability and legal awareness. Companies must integrate compliance considerations into their AI strategies from the earliest stages of development. This includes ensuring that data practices, intellectual property management, cybersecurity measures, and governance frameworks meet regulatory expectations. By adopting a proactive legal approach, organisations can unlock the full potential of artificial intelligence while minimising risk and maintaining regulatory compliance.